What does it say about a technology when even its biggest critics start supporting it?

Many CFOs put a stop to AI productivity platforms two years ago. Too expensive, too unproven. Now those same CFOs are asking why deployment is behind schedule. That kind of shift does not happen because of marketing. It happens because the results showed up.

Teams got leaner. Revenue targets did not. Companies that kept solving output problems by adding headcount found the math stopped working.

Hiring got expensive, turnover got unpredictable, and growth expectations kept climbing. AI tools moved off the exploratory roadmap and into the core budget because the pressure to perform left fewer alternatives on the table.

Hiring More People Stopped Solving the Problem

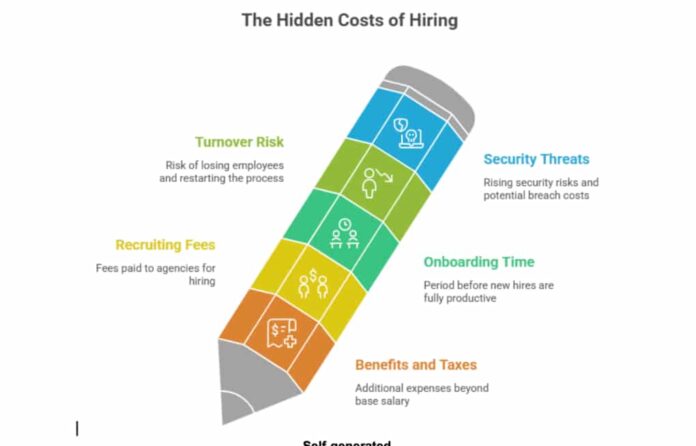

Running a company got expensive in ways that are easy to underestimate. Salaries are just the start. A lot of organizations learned this the hard way after the aggressive hiring periods of 2021 and 2022, followed by painful corrections in 2023.

And it is not just workforce costs putting pressure on operations; security threats are rising too. Leaders who fail to recognize and avoid phishing attacks often find themselves absorbing breach costs on top of everything else.

The true cost of a single hire goes far beyond the offer letter:

- Benefits packages and payroll taxes that stack on top of the base salary

- Recruiting fees that can run 15 to 20 percent of annual compensation

- Three to six months of onboarding before a new hire is fully contributing

- Turnover risk that resets the entire cycle all over again


So, How Does a Team of Eight Do What Twelve Used to Do?

That became the real question in a lot of operations meetings. For leaders sitting with that gap, AI tools started looking less like a tech experiment and more like a practical answer to a workforce math problem.

A platform that removes four hours of daily manual work per person carries a cost that looks completely different from the true cost of a full-time hire. Once leadership stopped treating AI as a technology decision and started treating it as a capacity decision, the budget conversation changed entirely.
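The capacity framing can be checked with simple arithmetic. The sketch below is illustrative only: it assumes an 8-hour workday (a figure not stated in this article) and treats every saved hour as fully convertible into productive work, which real teams rarely manage. The function name `recovered_fte` is hypothetical.

```python
# Assumption (not from the article): a standard 8-hour workday.
HOURS_PER_WORKDAY = 8


def recovered_fte(team_size: int,
                  hours_saved_per_person_per_day: float,
                  hours_per_workday: float = HOURS_PER_WORKDAY) -> float:
    """Full-time-equivalent capacity freed when each person saves some hours a day."""
    if hours_per_workday <= 0:
        raise ValueError("hours_per_workday must be positive")
    return team_size * hours_saved_per_person_per_day / hours_per_workday


team = 8
freed = recovered_fte(team, 4)  # four hours of manual work removed per person
print(f"{team} people + {freed:.1f} FTE of recovered time "
      f"= {team + freed:.1f} FTE of capacity")
```

Under those assumptions, eight people who each recover four hours a day free up the equivalent of four more full-time roles, which is roughly how a team of eight covers the work of twelve.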

What Happens When Companies Invest in AI and Productivity Tracking Tools

There is a tendency to talk about AI deployment in grand and abstract terms. The reality inside most organizations is considerably less dramatic.

- Customer support tickets get triaged and drafted before a human reviews them

- Phone-based interactions that once required a full support rep are now handled around the clock by an AI voice agent, which cuts wait times without adding headcount and lets teams focus on complex issues while every interaction stays aligned with defined processes and quality standards

- Weekly performance reports get pulled from raw data without anyone spending half a day reformatting spreadsheets

- Contract review catches the same standard clauses every legal team looks for anyway

- Job postings get written and updated without a back-and-forth that stretches across a week

None of it sounds revolutionary. All of it saves hours that used to come out of real people’s schedules every single week.

Productivity tracking tools operate differently but work toward a similar goal. The purpose is not to generate output through AI. It is to give managers genuine visibility into where work slows down, not where they assume it slows down based on gut feeling and status updates.

| What Companies Are Investing In | What They Report Getting Back | Typical Timeline to ROI |
| --- | --- | --- |
| AI Automation Platforms | 30 to 40 percent fewer hours on manual tasks | 6 to 12 months |
| Productivity Analytics Tools | Projects are delivered on time more often | 3 to 6 months |
| AI for Hiring and Onboarding | New hires are productive about 35 percent sooner | 1 to 3 months |
| Workforce Intelligence Software | Lower turnover, burnout caught earlier | 9 to 18 months |

These numbers are not from vendor pitch decks. They are showing up in post-implementation reviews from companies that have been running these systems for over a year.

The Visibility Gap That Most Managers Do Not Talk About

Most managers work from incomplete information. Standups, Slack check-ins, and weekly status updates provide a broad sense of direction, but they don’t reveal where a process has been quietly broken for six months.

A process that should take two days but often takes nine is rarely treated as a problem. It just becomes the way things work, until a productivity tool surfaces the pattern and somebody asks why it takes nine days.

That is the core value of these platforms when implemented well. Not watching individual employees, but identifying friction points across processes that nobody inside the system can easily see from where they sit.
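A minimal sketch of that kind of process-level check follows. Everything in it is hypothetical: the process names, the sample durations, the 2x threshold, and the `find_friction` helper are invented for illustration and do not describe any particular platform. The data is keyed by process rather than by person, in keeping with the focus on workflows over individuals.

```python
from statistics import median

# Hypothetical sample data: process -> (expected days, observed durations in days).
observations = {
    "budget approval": (2, [9, 8, 10, 9, 7]),
    "vendor onboarding": (5, [5, 6, 4, 5]),
}


def find_friction(observations, ratio_threshold=2.0):
    """Return processes whose median duration is at least
    ratio_threshold times the expected duration, worst first."""
    flagged = []
    for name, (expected_days, durations) in observations.items():
        typical = median(durations)
        ratio = typical / expected_days
        if ratio >= ratio_threshold:
            flagged.append((name, expected_days, typical, ratio))
    return sorted(flagged, key=lambda row: row[3], reverse=True)


for name, expected, typical, ratio in find_friction(observations):
    print(f"{name}: expected {expected} days, "
          f"typically takes {typical} ({ratio:.1f}x)")
```

Using the median rather than the mean keeps a single unusually slow case from flagging a process that normally runs on schedule.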

AI adds another layer on top of that. Instead of historical data just telling you what already happened, it starts pointing at what is likely to come. A project heading toward a missed deadline starts showing signals weeks in advance, and the platform surfaces them while there is still room to course-correct. That is a meaningfully different way to run project oversight than finding out something went wrong after the fact.


The Surveillance Concern Is Legitimate and Worth Taking Seriously

Tracking Tools Have a Trust Problem, and Some of It Is Deserved

Productivity tracking tools have earned some skepticism. There are real documented cases of companies using monitoring software in ways that cross a clear line:

- Tracking how long employees spend away from their desks

- Capturing screenshots every few minutes throughout the workday

- Logging keystrokes to verify remote workers are actively typing

- Monitoring bathroom break duration as a performance metric

That is not productivity management. That is distrust converted into software.

Companies that use these tools well operate with a completely different mindset. The focus goes on workflows and systems, not on individual behavior.

Here is what responsible implementation looks like:

- Teams are told upfront what data gets collected and why

- Insights are used to redistribute workload fairly across a team

- Data drives decisions about removing slow or broken processes

- Individual performance cases are never built from tracking data alone

That difference in approach changes everything about how employees respond. Nobody objects to a system that catches when their workload has gone past a reasonable level. People push back, often loudly, against one that feels like being watched.

Organizations that draw that line clearly tend to see adoption happen with little friction. Those that fail to draw it end up with a trust problem that is significantly harder to fix than the operational problem they were trying to solve in the first place.

The Sectors That Have Moved Past the Pilot Stage

Several industries have stopped evaluating these tools and started implementing them. In those sectors, they are no longer treated as experiments.

- Tech companies got there first. Their teams were already fully inside digital workflows, so adding AI capability was a natural extension of how work was already happening. Platforms like Rocket.new are a clear example of how quickly the builder ecosystem is raising the bar and giving technical teams tools that compress weeks of development work into hours.

- Financial services moved quickly for a specific reason: compliance. Reducing manual errors in regulatory reporting and shortening audit timelines justify the investment on risk management grounds before the efficiency gains are even factored in.

- Healthcare, specifically on the administrative side, is using these tools to handle complex staffing schedules and pull documentation tasks away from clinical staff who should be focused on patient care instead.

- Logistics operations cannot afford delays. Real-time AI tracking for shipment routing and workforce performance in distribution centers has become a standard operational tool in that industry.

- Professional services firms in accounting, legal, and consulting are using AI to handle proposal generation, client reporting, and time tracking, all work that used to consume junior staff time. This gives senior staff more capacity for the advisory work clients are paying for.

What connects all of these is that they operate in environments where inefficiency has a direct and measurable cost. Those industries moved fastest because the business case was clearest.

The Real Cost of Waiting

Companies that have not moved yet tend to describe their position as prudent. In 2021, that framing held up. In 2026, the gap between early adopters and holdouts is showing up in operational results.

| Where the Gap Is Visible | Without AI and Tracking Tools | With AI and Tracking Tools |
| --- | --- | --- |
| Project Delivery | Around 42 percent hit delays of two weeks or longer | Drops to roughly 18 percent |
| Burnout on Teams | Higher on manual-heavy workflows | Lower where repetitive work is automated |
| Internal Decision Speed | Slower because reporting cycles add lag | Faster because data surfaces on its own |
| Competitive Ground | Eroding as peers build operational capacity | Held through consistent investment |

The companies that started building these systems 18 to 24 months ago are running faster right now. Their project margins are more stable. Their sales cycles are shorter. And their best people have more bandwidth for work that requires judgment.

Catching up to that kind of operational advantage takes time, even after a company finally commits to adopting the tools. The window for getting ahead of this is still open. But it is not as wide as it was.

What the Companies Seeing Results Are Doing

Getting access to the tools is the simple part. Getting real business value out of them is where most implementations either succeed or stall out.

Organizations with strong outcomes started with a specific problem. Not “we want to be more efficient” but “we want to cut approval time on budget requests from 11 days to 4 days.” This kind of specificity creates a measurable target. Without it, nobody knows whether the investment is working.

Employees got involved early in the process, not just notified after decisions were made. The people doing the work day to day usually know where the friction is. Bringing them into the tool selection and rollout turns potential skeptics into people who want the thing to succeed.

Goals were tied to deadlines. Ownership was assigned. When early results fell short, there was a process for adjusting course rather than abandoning the effort.

Leadership behavior matters more than most companies account for. When executives reference the data in meetings and visibly let it inform their decisions, that signals to everyone else that this is a real operational priority.

When the data only gets checked in a monthly review nobody attends, the message is the opposite.


Where Things Stand Heading Into 2027

The capability of these tools has outrun the pace of adoption by a wide margin. Most companies are using a fraction of what is available to them, but that gap is starting to close fast.

CFOs who were skeptical 24 months ago are now asking their operations teams why rollout has moved so slowly.

The companies that move with clear goals, real employee involvement, and leadership that uses the data are building something that holds value regardless of what the next round of AI tooling looks like. The ones still waiting for the right moment may find the market has already decided for them.

The shift is happening with or without you. The only question is whether your organization is a part of it or catching up to it.

For more insights on business technology and workplace trends, visit netnewsledger.com